I enjoyed talking to the thoughtful team at Beyond Capital about impact investing in education; this half-hour of audio was the result. https://www.beyondcapitalpodcast.com/blog/the-future-of-education


Why I don’t like the idea of “Netflix for Education”

So many companies seem to be selling themselves as the “Netflix for Education” — perhaps most notably, Pearson in a recent article. It’s an idea I find depressing.

It’s easy to see why, at first glance, this is such an appealing elevator pitch.

For the learner and teacher, education as:

- personalised;

- drawing on a huge range of materials which are often of very high production values;

- allowing you to go at your own pace;

- hugely enjoyable.

For the investor, a business which is:

- clearly scalable across the world;

- reusing content with light localisation;

- using AI to reinforce its network effect;

- deploying a subscription business model with recurring revenues.

What’s not to like?

Well…

Unlike Netflix, the complicated business of teaching and learning is:

- collaborative (between teachers and students, students and students, teachers and teachers, and more, in multiple complex ways…) — whether this is just learning algebra in a classroom or practising key twenty-first century skills such as working in teams, empathy or critical thinking;

- deeply contextual: teachers judge what’s best to do next in a given moment using a wide range of very human inputs, many of which computers won’t be able accurately to assess any time soon. It’s not just about what has been chosen in the past; it’s about things like: has it been hot today? Did that child eat breakfast this morning? Jill is upset after that row in the playground;

- active, not passive: to borrow an old phrase comparing the internet and television, “lean forward” not “lean back”;

- sometimes inevitable, grinding hard work (however hard we try, learning Mandarin characters and sounds is just difficult and dull);

- often profoundly un-digital, involving practical messy work with the hands, or outdoor investigations, or physical activity like sport, drama or music.

I guess the whole idea diminishes what I feel education is really about. And, incidentally, it diminishes the scope of how Pearson and others might deliver impact, revenue and profit. A shame.

Photo credit: “South Carolina First Steps 4K students recite the alphabet in class at the Child Development Center at Shaw Air Force Base, S.C., May 31, 2018. The children learned through play, structured lessons and social interactions in preparation for further schooling. (U.S. Air Force photo by Airman 1st Class Kathryn R.C. Reaves)”

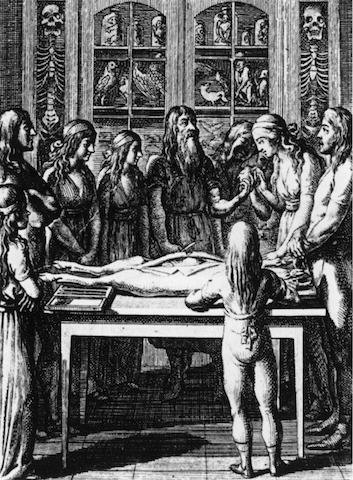

Treat with care

Perhaps it’s because I have needed to consult doctors and nurses recently. Perhaps it’s because there is one over-simplification about education that annoys me more than most others – the meme which unfavourably compares the relative use of technology in operating theatres and classrooms now and a hundred years ago. Perhaps it’s because I’m spending a lot of time working with the medically trained founders of adaptive learning company Area9 at the moment. But the use of medical language in the debates around the future of education seems to be growing, and seems to go largely unquestioned. Whilst the analogy can sometimes be helpful, it can also be lazy, misleading and counter-productive – both as we think about teaching and learning, and about medicine.

One area where the medical comparisons are useful is when talking about the value of professional training. Most researchers agree with the not-exactly-controversial idea that teachers are the single most important ingredient in student success. It’s hard to argue differently about doctors and nurses in the treatment of patients. Yet in the medical world, continuing professional development is not only regarded as common sense, but in many countries it is a legal requirement. In formal education, not so much. A failure to provide resources, incentives and career pathways which recognise teachers as reflective professionals is, in my view, a glaring weakness of many education systems around the world.

It also means we aren’t widely gathering evidence and reflecting on it to make things better. As Michael Feldstein recently wrote:

“…effective ed tech cannot evolve without a trained profession of self-consciously empirical educators any more than effective medication could have evolved without a profession of self-consciously empirical physicians”

Ah, “Researchers”. “Student success”. “Empirical”. Michael and I are of course adopting the language of “learning science” – the fraught and freighted sphere of establishing “what works” in education. And this is where the problems start.

Being empirical is relatively easy in medicine. People can be scientifically tested for the presence of ailments, diseases, pathogens and “abnormalities” within reasonable, globally agreed levels of probability. To caricature, you’ve got tuberculosis or you haven’t. Once the drugs have been administered, doctors and their managers can agree on whether or not the illness has gone.

Classrooms aren’t operating theatres. As I have written before, education is a world of values, subjectivity, and Politics (big “P” deliberate). Whilst most of the world can probably agree on whether or not three individual children from England, China and India can solve quadratic equations effectively, views on the “right” history of Hong Kong under the British Empire might be highly divergent. It’s very difficult to establish a definition of “success” here without acknowledging the (inevitable and necessary) value judgements which are involved. Which means a universal definition of “empirical” is often impossible. We all need to be honest about this, and devise intelligent ways to deal with it.

There’s also a more detailed debate about medical research methodologies being applied in education, thoughtfully covered by the UK National Foundation for Educational Research here, so I won’t rehearse it. Whilst a randomised controlled trial (RCT) is the gold standard in the world of medicine, it may not always be the right way forward in education.

More interesting, perhaps, is a linked broader issue. RCTs aim to find drugs and processes which make people better – medicine, at least in conventional Western circles, is mostly a business of repair. In classrooms or educational systems, we probably shouldn’t be “fixing” people. We should be nurturing them to fulfil their unique potential and giving them tools for life (note the Western liberal assumptions here, naturally).

The description of some educational software as “interventions” is almost militaristic – implying a dramatic break with a present which is unsatisfactory. When governments and thought leaders start talking about education as “broken”, there are worrying implications of retrospective conservatism, rather than creative, hopeful imagination. When I’ve worked on successful educational resources, the process has been one of co-creation and discussion – something done with teachers, institutions, researchers and learners, rather than to them. Medical metaphors take us down a troubling road here, and it could be argued that the top-down “intervention” approach to improving education has seen some notable failures (inBloom’s collapse or Zuckerberg’s doomed initiative in Newark being good examples).

Then again, many major healthcare systems are now adopting the idea that their work is not just about treating problems as they arrive. Living well, for a long period of time, can be seen as a partnership between medical researchers and the evidence they provide, trained reflective professionals acting as advisors and deploying the researched resources at their disposal, and patients taking responsibility for their own choices. A reflective, comparative discussion between health and education – about what they do and how they describe it – seems ever more fruitful.

Image: Engraving by Daniel Chodowiecki from Franz Heinrich Ziegenhagen, Lehre vom richtigen Verhältnis zu den Schöpfungswerken und die durch öffentliche Einführung desselben allein zu bewürkende algemeine Menschenbeglükkung (1792). Wikimedia Commons/Public Domain.

When I was asked to write about good uses of technology in education…

…I passionately felt that there was a more important question to answer. Post-Brexit and post-Trump, a thousand words came out in two hours. Quite understandably, the publication that asked me to pitch an article to them rejected what I sent. So here it is.

After two decades spent at the intersection of education, digital technology and commerce, I have never felt such mixed emotions. I am disappointed at how little has changed; accepting of how naïve I was when I started; and never more determined to engage with the complex, essential and exciting challenge of what I feel needs to be done.

We need to be frank. Despite widespread excitement and rhetoric, digital learning has so far shown few clear gains to societies around the world. School systems have not been transformed, curricula remain broadly unchanged, and in a number of countries (including the UK, my home) digital initiatives have withered. The evidence for “what works” is disputed and thin. Children are leaving educational systems ill-equipped for the new realities of globalisation, and many adults are unable to re-tool themselves as the world changes around them.

For sure, there are some contexts in which the use of technology has gained adoption. These are frequently those in which there are clear crises of value and low-cost gaps which need to be filled. In US Higher Education, online learning is slowly but firmly moving into the mainstream, not least because of major demographic changes in the student body, and the widespread questioning of the cost/benefit of a traditional four-year residential degree. Alongside universities offering online degrees we are seeing the rise of the coding bootcamp – both virtual and physical – which foregrounds practical digital skills. In language learning, “good enough” solutions have emerged – whilst a free app with no human interaction is highly unlikely to make you fluent in English, it may well give you enough to improve your job prospects. Digital test preparation tools and services for high-stakes public exams are mushrooming, with particularly substantial investment going to providers in India and China. Especially in Africa, interesting but controversial experiments are under way to raise education levels with low-cost private schools facilitated by technology.

Yet all of these so far feel like tinkering rather than the unleashing of dramatic potential. In developed economies, digital pedagogy is often explored by teachers in spite of the systems in which they are operating. Throughout the world, programmes to introduce technology in schools come and go, frequently driven by political whim rather than evidence and best practice, often losing funding before there is a chance to embed new ways of working. Teachers and systems resist change, for entirely understandable reasons – they don’t want to risk their children’s futures or their careers (both frequently based on unchanged traditional public examination results), and they have seen so many initiatives come and go that cynicism has set in. Not everyone is instantly comfortable with new technology, and the support and infrastructure they need to engage with it are rarely resourced properly. Educators are rarely given the space to develop as reflective professionals. There is a lack of patient, incremental capital which allows companies to grow at the pace of discursive educational change, rather than at the pace of Uber.

Technology still holds immense potential for learning. It holds out the opportunities of greater personalisation, more exciting resources, wider choices, and better evidence with consequent improved practice. More importantly perhaps, we need to reframe the issue: learning requires technology as our world becomes deeply digital. Our working tools are increasingly electronic. All of us need to understand the underpinnings (and underbelly) of the technology-driven societies we call our own, in the same way as most of us have long agreed that children need to know the basics of science. We have to know what we are dealing with, and know how to question it.

So – how do we make progress?

The problem here is agreeing what we want progress to be.

Education is frequently presented as an amoral scientific endeavour, particularly by technology companies who have solutions to sell. Learning algorithms will find the best pathways for our children. Teachers with more data will make better decisions. Virtual reality will bring History alive in unprecedented ways. Mobile devices will let us learn anything, anywhere.

Yet education is fundamentally, unavoidably, always about values. Algorithms are programmed by humans who decide on their priorities. Data is useless without filtering and interrogation. At its best, the study of History is learning a process of weighing evidence to find a provisional, consciously incomplete idea of the past – at its worst, a political endeavour to reinforce power. Content and data on mobile devices are mediated by powerful companies with agendas which may not always match our own.

In these days of division, fear and uncertainty, somehow we need to agree on an agenda for education in our various countries which serves to cement what holds us together rather than grow fissures into chasms. Technology can be a vital part of this. We have an extraordinary new ability to talk to and see people in the next community, or furthest country, at tiny cost. We may be able to develop new ways to assess and understand people’s abilities and potential, and help them develop the attitudes and skills which give them the best chance in life. It may even be possible more quickly to find them jobs that they can do wherever they find themselves, or wherever they want to move to, giving them the self-esteem and confidence which come from a stable, sufficient income. We can bring unprecedentedly deep and diverse experiences – of people and environments – to the learning process.

Those of us who have positions of possibility, knowledge and power within the world of education face a new imperative to articulate how technology is no more and no less than a tool in the service of what we want our children – and ourselves, and our fellow citizens – to experience. We need to talk about aims and values first and evaluate our educational tools, systems, practices and people (including ourselves) against them. We need to acknowledge the inevitable moral angle of our work and argue, in the spirit of honest dialogue, for what we believe in.

As ever, references are available if you contact me via Twitter @nkind88

Why we need to predict the futures, plural

It’s been a bad year for those paid to predict. This week has shown that perhaps more than any other. The pollsters and pundits have again failed to call the way in which people would vote, resulting in the second electoral shock of 2016 – after Brexit, Trump. As someone who has just stopped being paid to think about the future for a world-leading media group, I’m intrigued. As someone who is hopefully about to start helping at least one significant organisation to develop its future strategy, I’m daunted. And as someone who hopes regularly to deconstruct ideas about the future of education for investors and decision makers, I am unsurprised. (As a citizen of the world, I am greatly saddened by both events, but that’s in passing.)

The educational technology writer Audrey Watters regularly points out how we need to recognise that some venture capital firms, corporations and others create stories about the future to further their own agendas, and often dress them up as unassailable fact. She is essential reading for anyone making thoughtful strategy in education, and her latest, characteristically acerbic piece was published last week and persuasively skewered a number of well-known and influential organisations offering such predictions.

Yet as I read Audrey’s post I felt there was more to say, with more general application. There are times when we have to predict the future, and sometimes that has to be public. If you have the responsibility of allocating resources – for example, making decisions about where to invest money or cut jobs – I would argue you have a duty to do so having thought carefully about what might happen in the next months or years. If you have public shareholders and/or accountability, you may have to justify those choices openly.

The problems come when story is presented as fact, and complexity is ignored for the sake of the story. For example, personalised learning technologies hold great promise in theory, but are substantially unproven and likely to be very context-specific. They are not “a magic pill”, but that sounds a lot better and more confident to many people.

The way through, I think, is to be humble. One way to do this is to hold multiple futures as hypotheses simultaneously and to acknowledge that none of them are likely to be entirely accurate, but all may have elements of truth in them. Those hypothetical futures should be rooted in as much evidence about the trends leading to them as you can practically obtain, and perhaps in an examination of the motives and likely actions of key players as events unfold. This often involves looking for data which isn’t easily available. In other words, tell multiple stories and critically compare and contrast them.

If you can explore the implications of a number of possible futures for your decision, the decision will be better – and the next decisions as the world changes (unexpectedly) should be better too. Additionally – and importantly – you may be able to explore the unintended consequences of your possible paths of action. Sometimes the best intentions lead to the worst of outcomes, as many politicians have found in 2016.

As Audrey points out, the future is not inevitable. We make decisions which make things happen. If we acknowledge that predictions about the future are not only stories but also more or less likely possibilities, we are better placed to evaluate the probability and desirability of those possibilities. Then, we can debate and aim for the right ones. Given the times we are in, this seems more necessary than ever.

Image credit: https://www.flickr.com/photos/39908901@N06/22606265795 (CC BY SA 2.0)

As ever, links and references available on request.

Back in the blogosphere

I’m delighted to say that I am back in the blogosphere after a long absence – with a new site, the same interest in education, business and innovation worldwide, and a new mission around impact investing and better capitalism. Watch this space for insights and comment on the business of education, education technology and investing & innovating for good.

…and other things that have been preoccupying me…

Other things have been preoccupying me too – not least how to get the least worst balance between work, self and family. I could try and put down my thoughts on this, but it’s all been much better expressed and researched by Gaby Hinsliff on her blog and in her book (necessary disclosure: Gaby and I worked on the university newspaper together 20 years ago, and I enjoyed seeing her again at a party recently where we talked about all this). It’s succinct and very well written, and pretty well sums up the dilemmas my wife and I seem to face most days. Worth investing the time in, particularly for fortysomething parents.

A year of silence…

I know I haven’t posted for a year. There’s a reason for this. I have been doing a lot of thinking and learning about digital education – more than ever before – but it has all been under the auspices of my day job. Which means that I can’t share it publicly, as my contract is pretty draconian on that front. Sorry!

Educational in a different sense

I’d recommend my sister-in-law Emily’s blog about her current experiences in a rural region of Zambia. Her writing is eloquent and precise, and I want to know the next episode in the real-life, real-time story of her subject. It’s here:

Eton College, and what we can learn from it

Apparently Eton College is going to start up a school for less wealthy pupils – but this report does come from the Daily Mail, my least favourite newspaper, so treat with caution. And yet again, I hear that somebody at a conference is telling the world how much the English state school system can learn from one of the world’s most famous schools. I couldn’t make the Learning without Frontiers conference last month – so please correct me if I don’t quite get this right – but apparently a teacher stood up and lauded the 571-year-old public school for not having put any interactive whiteboards in its classrooms and for continuing to teach in a “traditional” way. I have attended other educational conferences which featured a lot of talk about why Old Etonians are allegedly so successful, and a similar questioning of “modern” curricula and educational approaches. Doubtless because our current British Prime Minister attended the school, and because there is a strong argument that the private school system still dominates the elite in Britain, Eton remains a fascination.

It’s not something I tend to mention very much – and never at conferences – but I went to Eton. So whenever this subject comes up in a public gathering I sigh inwardly and bite my tongue. It’s all too easy to be pigeon-holed as the posh, naïve, over-privileged Old Etonian, and I never feel that there is enough time to bore everybody with the detail of why everybody’s asking the wrong questions and not getting into the complexities of what might (and might not) be learned from possibly the most famous school in the world. So I’m finally getting it off my chest.

Eton is a bizarre, sometimes wonderful and sometimes weird, often brilliant and frequently ridiculous, resolutely unique, educational institution. I loved and hated it simultaneously, for many reasons, some personal, some philosophical, and some blindingly obvious.

The quality of its education – which was so inspirational it made me want to work in education for the remainder of my life – was grounded in some very basic and obvious facts and a lot of common sense principles.

The facts were that the school was highly selective and embarrassingly well resourced with brilliant teachers and world class facilities. This makes teaching and learning very easy, relatively speaking. It’s easy to love Mediaeval history if you are taught by an inspirational Fellow of All Souls, Oxford who treats you as if you are an undergraduate, or to have a lifelong passion for ceramics if your teacher exhibits his pots to critical acclaim in London and New York. I can’t speak from experience, but it must be a lot more straightforward to teach a class of 15 motivated, “posh” 17-year-olds picked for their intelligence than a group of 30 ill-at-ease and undervalued students in a poor (in both senses) comprehensive.

Eton’s overriding principles were to focus on the individual and make him (for there were, regrettably, only “hims”) feel that once he has been helped to find what he is best at and passionate about, whatever it is, there is no reason whatsoever that he shouldn’t be the best at it in the world. Simple but effective – and the snowball effect of saying “well, look at Jonathan Porritt/Ranulph Fiennes/Hugh Fearnley-Whittingstall, he did” (and yes, all right, David Cameron) – cannot be overestimated. This wasn’t about narrow academic aspiration or box-ticking, but about being pushed to do something exceptional – in whatever field was your chosen one. Learning was in itself the best thing you could do, and continue to do for the rest of your life. I still remember my “A” level English teacher explicitly telling us that the first part of the year would be about how to pass the exam – and then we would get on to the interesting bits.

So – Eton didn’t cram you with much that was out of date. It was never relentlessly focussed on force-feeding you with the speeches of Churchill or the countries of the British Empire. Intelligent questioning was always much more interesting than dull acceptance. Marx was studied, in depth if you wished (so was American blues music). Amazingly, inside the school confines, class and social status were relatively unimportant (though this changed dramatically in terms of social arrangements during the holidays – I got a scholarship, so my parents were nowhere near the income bracket and/or Burke’s Peerage listings of many of my contemporaries’, and I frequently felt “unposh” in this respect). Whilst discipline was necessarily present (usually quietly so), there was no corporal punishment and never humiliation from teachers. Whilst the place was deeply traditional in many ways, the tradition was often there for a purpose (although I maintain, as I did at school, that the uniform is loony and some of the team games deeply replaceable).

Perhaps most significantly, the school was excellent at teaching twenty-first century skills, utterly in line with the way in which many think the curriculum should now develop. I learnt to speak and perform in public; to work in teams; to solve problems; to think creatively; and to learn how to learn, without ever knowing that I was doing so. We learnt these skills via “traditional” methods: by performing plays, by playing team games, by being academically stretched, through other extra-curricular activities, through our teachers’ passion for learning and through the constant challenging of our intellects. All of these “twenty-first century” concepts were so ingrained in the everyday experience, and had been for generations, that they just seemed obvious. It illustrates how the whole concept of “traditional” is deeply suspect in this context.

Which brings me back to technology. I suspect that Eton’s classrooms don’t have interactive whiteboards because they haven’t been judged to be the most cost-effective use of technology. (If this is the rationale, I’m afraid I would agree.) But – and you need only read the College’s website to find this out – all students are obliged to take a laptop to school with them and can use the Ethernet connection which they will find in their room. Every single student has their own room at Eton, and every single one is connected to the internet and to the college’s own intranet.

I quote http://www.etoncollege.com/ComputerSpecifications.aspx complete with its Eton jargon (“F” block is the name for the first year of the school):

Computing forms an important part of the curriculum for new boys in F block. Almost all school events are advertised via email and internal websites, and a boy will use his computer daily for work and administration.

With of the growing use of IT in the curriculum and the need to ensure effective technical support for boys throughout the school, we have defined a minimum specification for boys’ computers.

Technology is there, but it’s been quietly and quickly incorporated into the fabric of the school where it’s useful and sensible.

Eton was not perfect by any stretch when I was there. It was particularly bad at training you to deal with what happens when things irredeemably don’t work out, whether it’s your fault, other people’s, or nobody’s. It had a pretty patronising and narrow view of the world of business. It undoubtedly has some unpleasant former pupils. It is hugely unfair that only 1200-odd pupils have access to such an experience at any one time, at a cost prohibitive for nearly everybody. Nevertheless, it is of course a remarkably successful institution.

So what I always want to say at conferences is the following:

- What success there is, is not about looking backwards to nostalgic notions of how education used to be. It’s about quality teachers, immense confidence, and high aspirations, together with pupil selection and amazing resources. Tradition is either motivational window-dressing or serves a sensible purpose, and is always questioned for its alignment with the values of the school.

- I hope that quality teachers, immense confidence, and high aspirations are transferable into every school around the world.

- I know – regrettably – that the resourcing is not, and selection is a deeply charged issue (which I’m not going to engage with now).

- The interesting unanswered question is the relative importance and interdependence of these factors in creating a successful school. I hope and feel that resourcing and selection are actually the least important of them. What do you think?